In the not-so-distant future, when an autonomous vehicle controlled by artificial intelligence collides with a human, who is to blame?

This is a question that pops up at almost every AI conference we’ve attended, and we’re still a long way from answering it definitively.

At the Microsoft AI days happening this week in Johannesburg, where the technology giant is engaging with government and business on the topic of AI, we were the ones to pose this question to Microsoft’s sales lead for AI solutions in EMEA, Alessio Bagnaresi.

“Artificial intelligence is a technology that promises to revolutionise a variety of industries from healthcare, education, agriculture and transportation, especially in respect of driverless cars. Our aim is to use this technology to change industries and also really try to improve the society,” says Bagnaresi.

That last point is the key driver for Microsoft when it creates AI solutions – improving society.

As the Redmond company sees it, AI should be used to amplify human ingenuity rather than replace it altogether.

Take Saqib Shaikh, for instance. Shaikh is a blind software engineer at Microsoft who used the firm’s cognitive AI solutions to create an app that relays information about the world to him. This functionality even extends to reading the facial expressions of his colleagues. Take a look at the video below or try out Microsoft’s SeeingAI app for iOS yourself.

This app allows Shaikh to engage with the world in a more meaningful way even though he is blind. As you heard in the video, it started with computers being able to talk, and that has evolved into something far more useful thanks to his ingenuity.

This is just one of many examples that showcase how Microsoft is using AI to amplify the capabilities of people.

How Microsoft develops AI

At this juncture, it may seem like our question has been answered – humans use AI, therefore the human is responsible for the faults. Sadly, it’s not as cut and dried as that.

To explain, we need to look at how Microsoft develops AI, or rather the key pillars it follows when developing solutions for its customers.

The first pillar is fairness. Apologies to our future robot overlords, but in 2018 AI is still very dumb when it enters this world. Like a child, it has to learn, and while it can learn quickly, one must be aware of what it is being taught.

We all remember Tay, the Microsoft AI that turned into a racist, bigoted bot the moment the internet got a hold of it. That incident showcases bias perfectly because Tay’s creators didn’t account for the darker minds on the internet. When programming AI, one needs to look at every single angle, and while the bot is operating it needs to be monitored constantly to ensure that biases are accounted for. Programming with bias will result in a biased output.

On top of this, AI must be inclusive, for the sake of the people who will use it and the sake of the firm creating the solution. “There are close on one billion people with disabilities and we need to provide equal opportunities to everybody,” explains Bagnaresi. “We want to use this technology to empower people to do more.”

Other key considerations when Microsoft develops AI solutions include reliability and safety.

Privacy and security are rather important as well. The anonymisation of data is vital in a world where so much personal information is available, and for its part Microsoft is re-engineering all of its software to comply with global regulations such as GDPR. It’s also an ethically sound practice that feeds into one of the more important pillars of development – transparency.

Because AI is able to gather and learn from thousands upon thousands of data points easily, it’s vital to inform customers about what the AI is doing with that information. More and more people are starting to understand the value of their data and want it to be protected. Facebook is learning this the hard way: its investment in information protection, meant to make its users safer, has had the knock-on effect of costing the firm money and investor confidence.

And finally, on to the question at hand – who is accountable for AI?

One would hope that we are closer to answering that question following the accident involving an autonomous Uber vehicle earlier this year, but we really aren’t.

“That is a topic of big debate,” says Bagnaresi, answering the question about who is responsible for an accident involving a self-driving vehicle. “That is one of the reasons these technologies are not yet commercially available. That is an area that needs to be regulated with laws before anything enters a production environment.”

Simply put, the laws that will govern AI will likely come from policy makers, which is a bit concerning given that some US senators don’t know how Facebook makes money.

Microsoft is also working with firms such as IBM and Google in a bid to understand why AI makes the decisions it does.

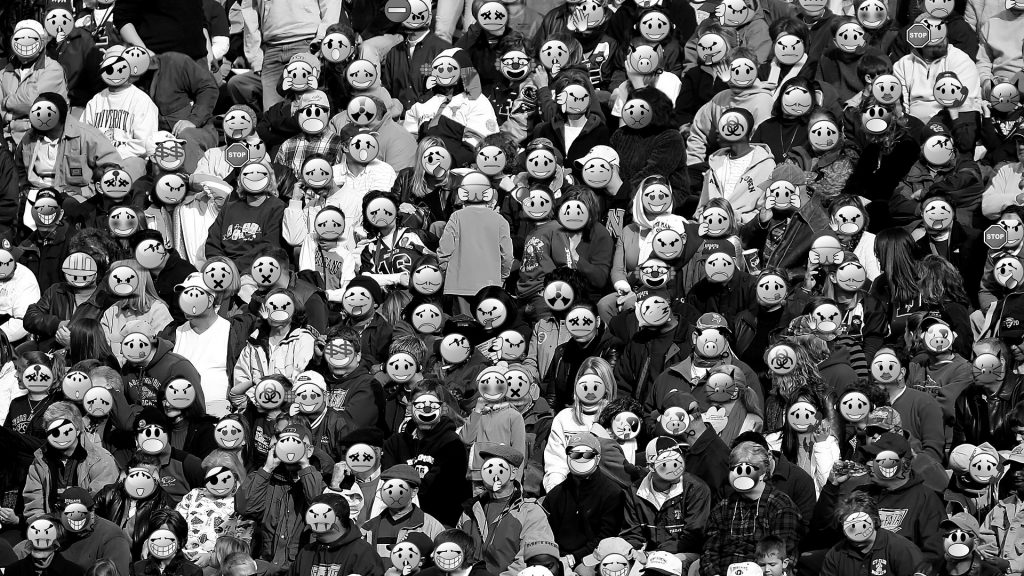

The underlying message within Bagnaresi’s talk is that diversity is key to developing AI. No one person can account for every bias in the world, and that’s okay, but a firm must consider the impact its AI solution could have on everybody or run the risk of having a chatbot turn into Tay.

[Image – CC 0 Pixabay]