Twitter has updated its hateful conduct policy in a bid to make the rules a bit clearer about what exactly constitutes hate speech.

The latest set of updates now prohibits language that dehumanises people on the basis of their race, ethnicity or national origin.

“In July 2019, we expanded our rules against hateful conduct to include language that dehumanizes others on the basis of religion or caste. In March 2020, we expanded the rule to include language that dehumanizes on the basis of age, disability, or disease,” Twitter explained in a blog post.

With these new rules, content that contains slurs as outlined above will be immediately removed from Twitter.

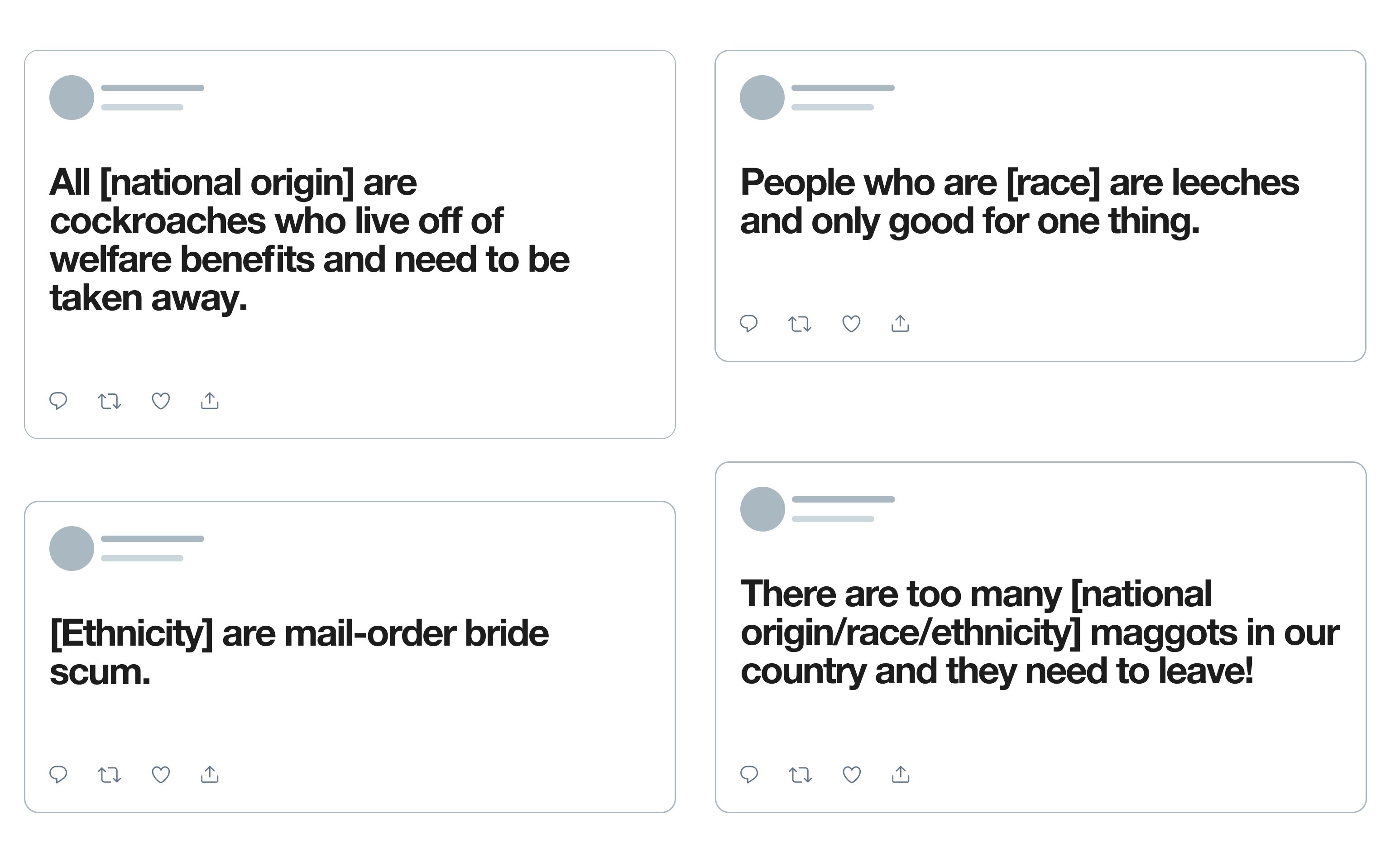

Twitter also included examples of tweets which would be removed according to this updated policy.

So why is this update happening? Surely Twitter’s rules were clear beforehand? That may be the assumption, but it isn’t the case.

Following feedback from its community, Twitter learned that it needed to be clearer about what exactly constitutes hate speech. This means Twitter had to provide context for what is considered a violation of the rules.

Twitter also learned that its use of “identifiable groups” was too broad.

“Respondents said that ‘identifiable groups’ was too broad, and they should be allowed to engage with political groups, hate groups, and other non-marginalized groups with this type of language. Many people wanted to ‘call out hate groups in any way, any time, without fear.’ In other instances, people wanted to be able to refer to fans, friends, and followers in endearing terms, such as ‘kittens’ and ‘monsters’,” Twitter wrote.

The third lesson is perhaps the most important: consistent enforcement.

Twitter says it has improved training so that its teams are better equipped to enforce its policies fairly and consistently.

Whether these updates will help in fighting hate speech on Twitter remains to be seen, but at least Twitter is actively updating its policy based on feedback.

[Source – Twitter]