- Instagram is testing a new feature that blurs any images that may feature nudity on the social media platform.

- The new feature is designed to address potential sextortion, as well as protect younger users from seeing sexually explicit content.

- It will be turned on by default for any user younger than 18.

Meta says it has been tackling sextortion across its social media platforms for a few years, and is now rolling out new tools on Instagram in particular to address it.

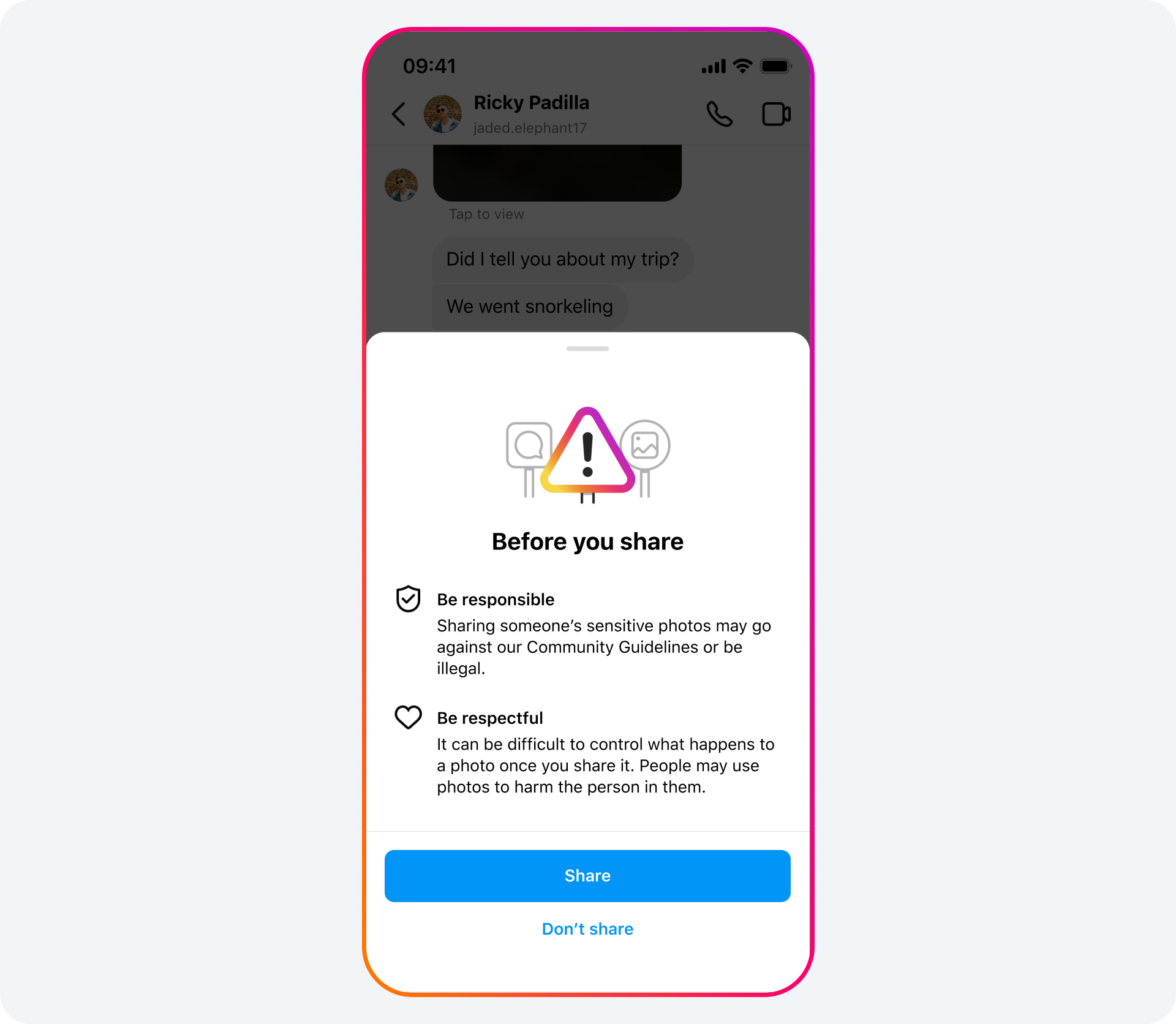

It is looking at ways to make users think twice before sharing any images that contain nudity on the platform, with a blur function being added among other new tools that it is testing out.

“Today, we’re sharing an overview of our latest work to tackle these crimes. This includes new tools we’re testing to help protect people from sextortion and other forms of intimate image abuse, and to make it as hard as possible for scammers to find potential targets – on Meta’s apps and across the internet. We’re also testing new measures to support young people in recognizing and protecting themselves from sextortion scams,” it wrote in a blog post.

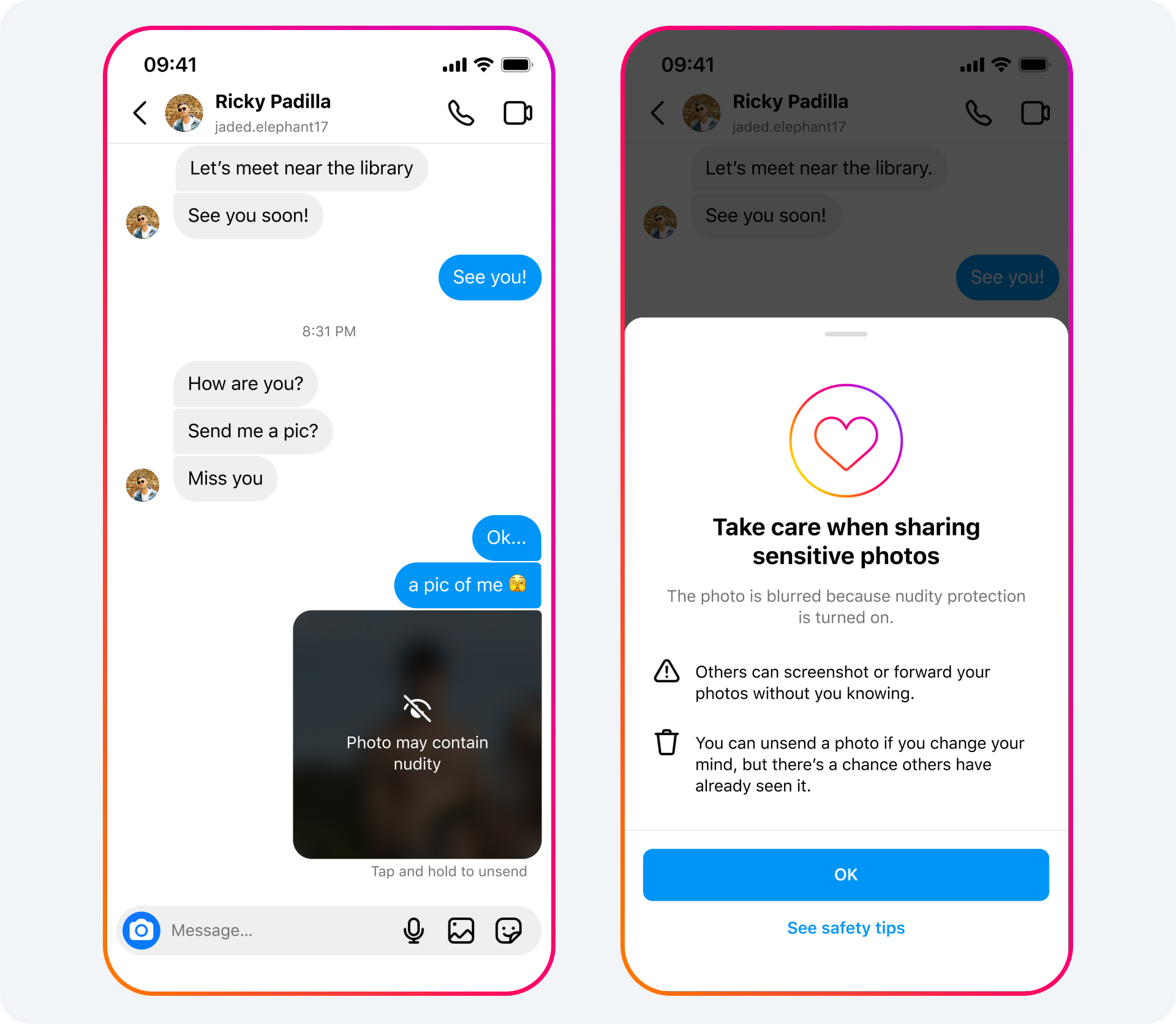

“We’ll soon start testing our new nudity protection feature in Instagram DMs, which blurs images detected as containing nudity and encourages people to think twice before sending nude images. This feature is designed not only to protect people from seeing unwanted nudity in their DMs, but also to protect them from scammers who may send nude images to trick people into sending their own images in return.”

Meta adds that the nudity protection feature will be on by default for users under 18, and that it will also prompt adult users to turn the feature on, in a bid to limit the number of nude images being shared on the platform.

“When nudity protection is turned on, people sending images containing nudity will see a message reminding them to be cautious when sending sensitive photos, and that they can unsend these photos if they’ve changed their mind,” it explained.

“When someone receives an image containing nudity, it will be automatically blurred under a warning screen, meaning the recipient isn’t confronted with a nude image and they can choose whether or not to view it. We’ll also show them a message encouraging them not to feel pressure to respond, with an option to block the sender and report the chat,” Meta continued.

While some may argue that this is overkill, one only needs to spend a few minutes scrolling on other social media platforms like X to see just how much nude and sexually explicit content is being shared. Much of it is hard to avoid, which is concerning if a minor is using the platform.

Sextortion, scamming, and desensitisation also become more pressing problems when such content is shared rampantly.

As these new tools are still being tested, it will be interesting to see how users react, and what kind of impact they have on the overall experience on Instagram.