In February, Google and Jigsaw announced Perspective, a tool intended to help publishers sort through toxic comments.

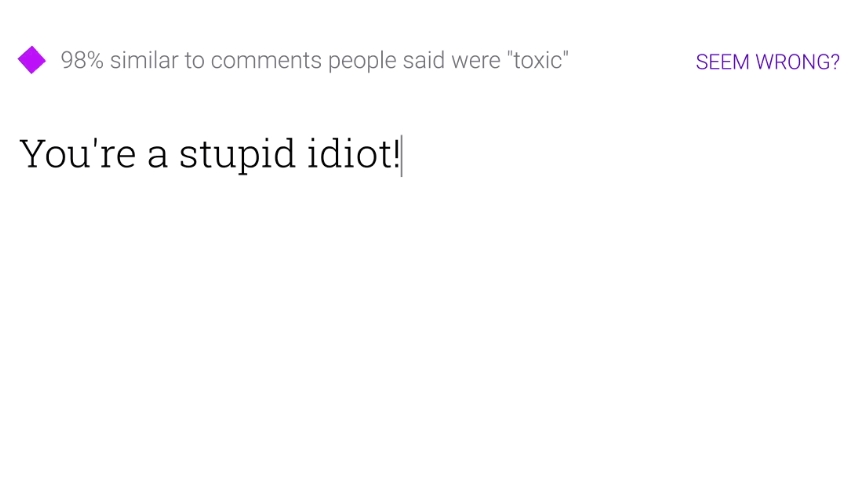

However, new research coming out of the University of Washington’s Network Security Lab has revealed that a few typos could fool the system.

The team found that a sentence which reads “they are liberal idiots who are uneducated” received a toxicity rating of 90%.

When a few full stops were added in places they shouldn’t be, so that the sentence read “they are liberal i.diots who are un.educated”, the toxicity rating dipped to 15%.
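The kind of change the researchers describe is trivial to produce by hand or in code. The snippet below is only an illustrative sketch of the text modification itself: the function name `insert_full_stops` and the `split_points` mapping are hypothetical, it is not the researchers' own tooling, and it does not call Perspective or compute any toxicity score.

```python
def insert_full_stops(sentence, split_points):
    """Insert a full stop inside selected words of a sentence.

    split_points maps a lowercase word to the character index at which
    the full stop is inserted (hypothetical helper, for illustration only).
    """
    words = []
    for word in sentence.split():
        index = split_points.get(word.lower())
        # Only split inside the word, never before its first or after its last letter.
        if index is not None and 0 < index < len(word):
            word = word[:index] + "." + word[index:]
        words.append(word)
    return " ".join(words)


original = "they are liberal idiots who are uneducated"
modified = insert_full_stops(original, {"idiots": 1, "uneducated": 2})
print(modified)  # they are liberal i.diots who are un.educated
```

A human reader still understands the modified sentence at a glance, which is why this sort of typo is an effective way to slip past a model that has only seen the unmodified spellings.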

The researchers also found that sentences containing so-called toxic words in a non-abusive context were flagged. According to a report by Ars Technica, a comment reading “not an idiot” scored as high for toxicity as “idiot” did.

Perspective product manager CJ Adams told Ars Technica that while research such as this is important and welcomed, it should be taken with a grain of salt.

“Perspective is still a very early-stage technology, and as these researchers rightly point out, it will only detect patterns that are similar to examples of toxicity it has seen before,” explained Adams.

“The API allows users and researchers to submit corrections like these directly, which will then be used to improve the model and ensure it can understand more forms of toxic language, and evolve as new forms emerge over time,” he concluded.

[Via – Ars Technica]